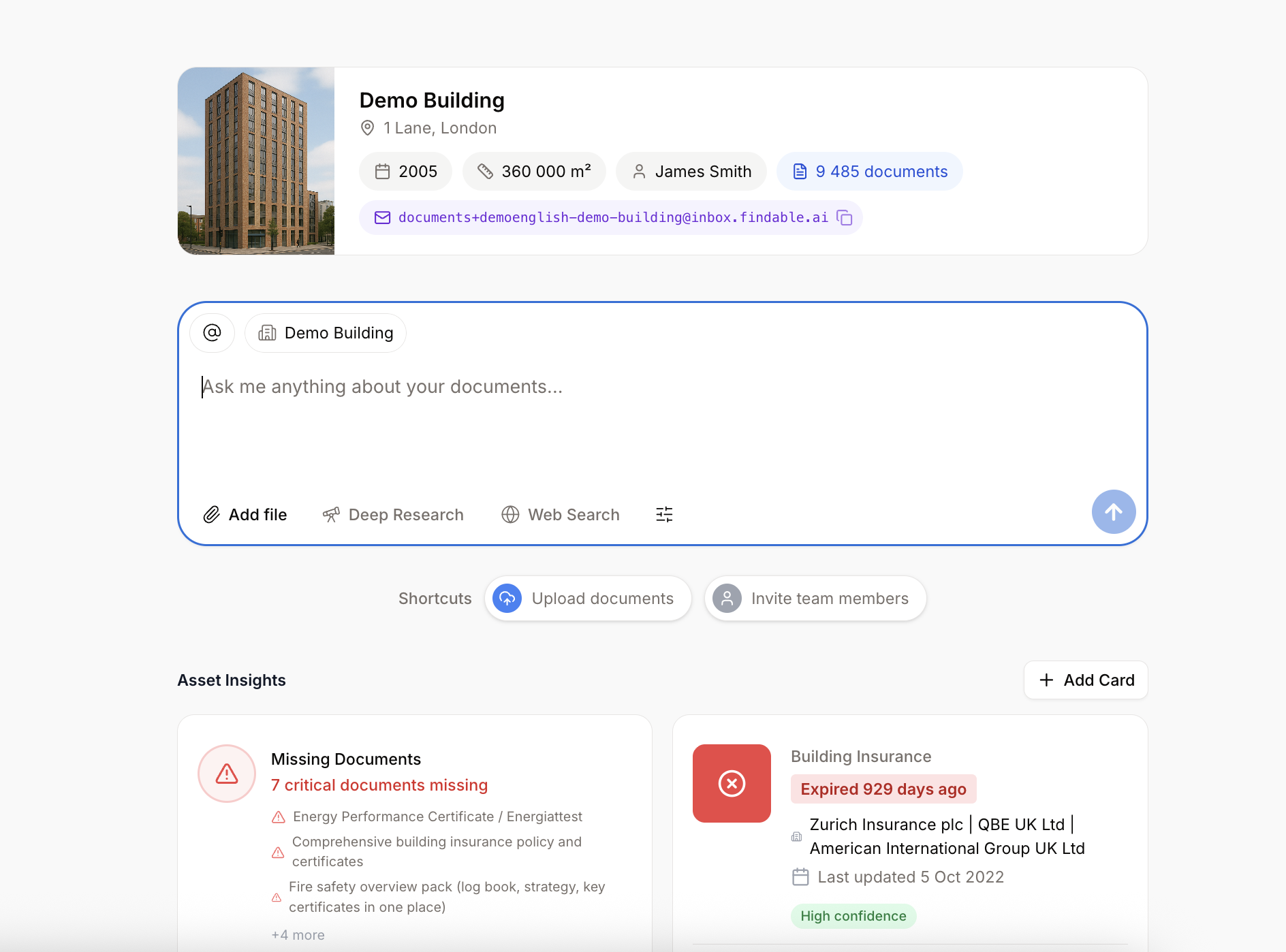

Findable is a Norwegian AI company transforming how the real estate industry manages building documentation. Their AI-powered Building Intelligence Platform helps asset managers and property owners classify, search, and organize millions of building documents, turning scattered files into actionable intelligence. Founded in 2020, Findable is backed by Point Nine, KOMPAS, Construct Venture, and Lake Eight through a €9M Series A round. It serves more than 150 property organizations across Norway and the UK, including JLL, Colliers, and Knight Frank.

At the core of Findable’s platform is a document processing pipeline that handles OCR, classification, extraction, and indexing at scale. As enterprise customers onboard with entire corpora of building documentation, the infrastructure behind this pipeline needs to scale rapidly and cost-efficiently. After outgrowing their initial AWS serverless architecture, Findable turned to Cloudfleet to run their workloads across multiple cloud providers and power the next chapter of their growth.

Challenge

As Findable scaled into larger enterprise accounts, the business faced two pressures that their original infrastructure could not absorb. First, customers in the real estate sector handle sensitive building data and impose strict requirements on where that data is stored and processed, often demanding European jurisdictions and clear data sovereignty guarantees. Second, the unit economics of AI document processing had to improve dramatically for Findable to keep margins healthy as enterprise contracts brought ever larger document corpora into the pipeline.

Findable originally built their document processing pipeline on AWS using a serverless architecture with ECS Fargate, Lambda, SQS queues, and EventBridge for orchestration. While this setup was easy to bootstrap, it became increasingly complex and expensive as the company scaled, and tied the team to a single provider with limited control over where workloads ran.

The core issues were clear:

- High compute costs: Fargate and Lambda cost significantly more per unit of compute than standard VMs, and document processing workloads are compute-intensive by nature

- Limited data control: Running exclusively on AWS made it difficult to meet customer demands for European data residency and provider diversity

- Operational complexity: Managing state across stateless functions required a growing web of SQS queues and EventBridge rules, making the system harder to reason about and debug

- Slow deployments: AWS CDK rebuilt all Docker images on every deployment, causing development environment builds to exceed one hour

As Findable onboarded larger enterprise customers, each bringing thousands of building documents, the spiky nature of the workload amplified these challenges. The team needed infrastructure that could handle rapid scale-up during onboarding, keep costs predictable during steady-state operation, and give them the flexibility to place workloads on providers that matched their customers’ data protection requirements.

Solution

Findable migrated their AI document processing pipeline to Cloudfleet, replacing their AWS-only serverless setup with a multi-cloud Kubernetes architecture spanning Hetzner and Exoscale. The migration replaced not just the compute layer, but fundamentally improved how workloads are orchestrated and deployed.

The key architectural changes included replacing SQS and Lambda-based orchestration with Temporal, a durable workflow engine that runs natively on Kubernetes. With Temporal, Findable gained built-in fault tolerance: if a worker node fails mid-OCR, Temporal replays the workflow's recorded history on another worker and retries the interrupted step without data loss. The team also gained full visibility into the pipeline and can see exactly where any given document is in processing without inspecting message queues.
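To make the replay idea concrete, here is a toy model in plain Python. It is not Temporal's API: the `History` class and `run_pipeline` function are hypothetical, and Temporal itself stores event history on its server and replays workflow code deterministically. The sketch only shows the core principle, which is that steps already recorded in the history are taken from it rather than executed again after a failure.

```python
from typing import Callable, Dict, List, Optional, Tuple

# Toy model of durable execution (hypothetical names, not Temporal's API).
# Each completed step's output is appended to an event history; when the
# pipeline is re-run after a failure, recorded steps are replayed from the
# history instead of being executed again.


class History:
    def __init__(self) -> None:
        self.events: List[Tuple[str, str]] = []  # (step name, step output)


Step = Tuple[str, Callable[[str], str]]


def run_pipeline(
    document_id: str,
    history: History,
    steps: List[Step],
    fail_at: Optional[str] = None,
) -> Tuple[str, List[str]]:
    """Run the steps in order and return (final output, steps actually executed).

    `fail_at` simulates a worker node dying just before the named step.
    """
    recorded: Dict[str, str] = dict(history.events)
    executed: List[str] = []
    data = document_id
    for name, step in steps:
        if name in recorded:
            data = recorded[name]  # replay: reuse the recorded output
            continue
        if name == fail_at:
            raise RuntimeError(f"worker lost during {name!r}")
        data = step(data)
        history.events.append((name, data))  # record the result durably
        executed.append(name)
    return data, executed


steps: List[Step] = [
    ("ocr", lambda d: d + ":text"),
    ("classify", lambda d: d + ":class"),
    ("extract", lambda d: d + ":fields"),
]
history = History()
try:
    run_pipeline("doc-42", history, steps, fail_at="classify")
except RuntimeError as err:
    print(err)  # worker lost during 'classify'

result, executed = run_pipeline("doc-42", history, steps)
print(executed)  # ['classify', 'extract']
print(result)    # doc-42:text:class:fields
```

On the second run, `ocr` is not repeated. Its output comes from the history, and only the interrupted `classify` step and the remaining `extract` step execute.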

Infrastructure-as-code moved from AWS CDK to Pulumi, giving the team more flexibility with TypeScript and Python. CI/CD pipelines were optimized to build and deploy only changed components, and deployments shifted to direct Kubernetes rollouts on Cloudfleet. Because Cloudfleet exposes a standard Kubernetes API across every cloud provider, rolling out a new version is now a matter of updating a deployment manifest rather than rebuilding container images and reconfiguring serverless functions through CDK. This is what cuts deployment times from over an hour to under five minutes: the slow CDK image rebuild cycle is replaced with incremental container builds and a fast rollout against a cluster that Cloudfleet manages end-to-end.
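The case study does not describe how Findable's CI decides what changed. The sketch below shows one common approach, using assumed names. It maps the files touched by a commit (for example, the output of `git diff --name-only`) to the component directories of a monorepo. A component is rebuilt only when its own files change or when a shared library it depends on changes. The directory layout and component names are hypothetical.

```python
from pathlib import PurePosixPath
from typing import Dict, Iterable, Set

# Hypothetical monorepo layout: component name -> source directory.
COMPONENTS: Dict[str, str] = {
    "ocr-worker": "services/ocr",
    "classifier-worker": "services/classifier",
    "extractor-worker": "services/extractor",
}

# Hypothetical shared code: directory -> components that depend on it.
SHARED_DEPS: Dict[str, Set[str]] = {
    "libs/common": {"ocr-worker", "classifier-worker", "extractor-worker"},
}


def is_under(path: str, directory: str) -> bool:
    """True if `path` is `directory` itself or lies somewhere beneath it."""
    p, d = PurePosixPath(path), PurePosixPath(directory)
    return p == d or d in p.parents


def components_to_build(changed_files: Iterable[str]) -> Set[str]:
    """Return the components whose image must be rebuilt for this change set."""
    to_build: Set[str] = set()
    for path in changed_files:
        for name, directory in COMPONENTS.items():
            if is_under(path, directory):
                to_build.add(name)
        for directory, dependents in SHARED_DEPS.items():
            if is_under(path, directory):
                to_build |= dependents
    return to_build


# e.g. changed_files = output of `git diff --name-only origin/main...HEAD`
print(sorted(components_to_build(["services/ocr/main.py", "README.md"])))
# ['ocr-worker']
print(len(components_to_build(["libs/common/storage.py"])))
# 3
```

Only the returned components get new images. Every other Deployment keeps its current image tag, which is the kind of incremental build the paragraph above describes.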

Cloudfleet’s node auto-provisioning handles the elastic scaling that Findable’s workload demands. When large onboarding jobs hit the pipeline, Cloudfleet automatically provisions additional nodes across providers to meet demand. During quiet periods, infrastructure scales down to minimize costs. This gives Findable the elasticity they previously relied on serverless for, but at a fraction of the price.
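At its core, node auto-provisioning answers a packing question: how many nodes are needed to fit the pods waiting to be scheduled? The sketch below answers it with first-fit-decreasing bin packing on CPU requests alone. It is an illustration, not Cloudfleet's provisioning algorithm, which is not described here. A real provisioner also weighs memory, GPUs, scheduling constraints, and per-provider instance prices. The node size and pod requests are hypothetical.

```python
from typing import List


def nodes_needed(pod_cpu_requests: List[float], node_cpu: float) -> int:
    """Estimate how many identical nodes fit the pending pods, by CPU only.

    Uses first-fit decreasing: place the largest pods first, each onto the
    first node with enough spare CPU, opening a new node when none fits.
    """
    spare: List[float] = []  # remaining CPU on each node opened so far
    for request in sorted(pod_cpu_requests, reverse=True):
        if request > node_cpu:
            raise ValueError(f"a {request}-vCPU pod cannot fit on a {node_cpu}-vCPU node")
        for i, free in enumerate(spare):
            if request <= free:
                spare[i] = free - request
                break
        else:  # no existing node had room: provision another one
            spare.append(node_cpu - request)
    return len(spare)


# Hypothetical onboarding burst: 40 OCR pods at 2 vCPU each on 16-vCPU nodes.
print(nodes_needed([2.0] * 40, node_cpu=16.0))  # 5
# Hypothetical quiet period: three pods fit on a single node.
print(nodes_needed([2.0] * 3, node_cpu=16.0))   # 1
```

When the burst drains, the same calculation yields fewer nodes, and the surplus ones can be removed. This is the scale-down behavior described above.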

While compute moved to Hetzner and Exoscale, Findable continues to use AWS services like S3 for storage. Cloudfleet’s built-in cloud API integrations make this seamless and secure, allowing workloads to access AWS services without storing or rotating credentials, eliminating a common source of security risk.

The migration was completed in weeks rather than months, with AI-assisted tooling helping port Lambda handlers and SQS consumers to Temporal Activities and Workers. The first workflow was running on the new infrastructure within days.
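Much of such a port comes down to separating business logic from the transport that delivers work to it. In the hypothetical sketch below, the SQS-triggered Lambda handler only unwraps messages. Lambda delivers each SQS message as a JSON string in `event["Records"][i]["body"]`. The `process_document` function holds the logic that carries over unchanged. The function names and message fields are assumptions for illustration.

```python
import json
from typing import Any, Dict, List


def process_document(document_id: str) -> Dict[str, str]:
    """Hypothetical core logic, free of any queue or transport concerns."""
    return {"document_id": document_id, "status": "processed"}


def lambda_handler(event: Dict[str, Any], context: Any) -> List[Dict[str, str]]:
    """Before the port: an SQS-triggered Lambda that only unwraps messages.

    Each SQS record's `body` is the raw message string (assumed here to be
    JSON with a hypothetical `document_id` field).
    """
    return [
        process_document(json.loads(record["body"])["document_id"])
        for record in event["Records"]
    ]


sample_event = {"Records": [{"body": json.dumps({"document_id": "doc-1"})}]}
print(lambda_handler(sample_event, None))
# [{'document_id': 'doc-1', 'status': 'processed'}]
```

Once the logic is isolated like this, the same function can be registered as a Temporal Activity. In the Temporal Python SDK, that means decorating it with `@activity.defn` and passing it to a `Worker`. For a synchronous function like this one, the `Worker` also needs an `activity_executor`. The SQS consumer code is then no longer needed.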

Results

The impact was immediate and dramatic. In the first month after migration, Findable handled almost twice the document processing workload compared to the previous month, at a fifth of the cost.
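Combining the two figures gives the change in cost per unit of work. The calculation below takes the workload as exactly twice the previous month's. That is an upper bound, since the text says "almost twice". With cost at one fifth, cost per unit of work falls to about a tenth of its previous level.

```python
def unit_cost_ratio(workload_multiplier: float, cost_multiplier: float) -> float:
    """Cost per unit of work after the change, relative to before."""
    return cost_multiplier / workload_multiplier


# December -> January, taking "almost twice" as 2x (upper bound on workload).
print(f"{unit_cost_ratio(workload_multiplier=2.0, cost_multiplier=1 / 5):.2f}")  # 0.10
```

With a workload multiplier slightly below 2, the ratio lands slightly above 0.10. That is still roughly a tenfold reduction in cost per unit of work.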

"In January, we handled almost twice the workload we handled in December at a fifth of the cost."

Knut Hellan, CTO & Co-founder, Findable

Developer experience improved just as significantly. Deploying to development environments now takes less than five minutes, down from over an hour with the previous AWS CDK setup. Production rollouts became a matter of restarting pods, giving the team fast and granular control over releases.

Beyond cost and speed, the migration gave Findable better operational visibility. With Temporal managing workflow state, the team can track every document through the pipeline stages (OCR, classification, extraction) without the complexity of debugging distributed message queues. If a node fails, work is automatically retried without data loss.

Today, Findable runs workloads across Hetzner and Exoscale within a single Cloudfleet cluster. Compute-intensive document processing runs on Hetzner for cost efficiency, while critical infrastructure services run in parallel on a separate cloud provider to ensure high availability. If one provider experiences an outage, Findable’s operations continue uninterrupted. With Cloudfleet’s unified control plane, managing workloads across multiple cloud providers requires no additional operational complexity.
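The resilience argument can be checked with a simple model. Place replicas of a service across providers, then ask whether at least one replica survives the loss of any single provider. The sketch below uses round-robin placement and hypothetical replica counts. In a Kubernetes cluster, this kind of spread is usually expressed with `topologySpreadConstraints` or pod anti-affinity keyed on a node label that identifies the provider. The label Cloudfleet actually uses is not given here.

```python
from collections import Counter
from typing import Dict, List


def spread_replicas(replicas: int, providers: List[str]) -> Dict[str, int]:
    """Round-robin placement: how many replicas land on each provider."""
    counts = Counter(providers[i % len(providers)] for i in range(replicas))
    return {p: counts[p] for p in providers}


def survives_single_outage(placement: Dict[str, int]) -> bool:
    """True if at least one replica remains whichever single provider fails."""
    total = sum(placement.values())
    return all(total - on_provider > 0 for on_provider in placement.values())


# Hypothetical critical service spread over both providers.
critical = spread_replicas(3, ["hetzner", "exoscale"])
# Hypothetical batch workers pinned to the cheapest provider.
batch = spread_replicas(3, ["hetzner"])

print(critical)  # {'hetzner': 2, 'exoscale': 1}
print(survives_single_outage(critical), survives_single_outage(batch))  # True False
```

A service pinned to one provider, like the cost-optimized batch workers in this sketch, does not pass the check. This is why spreading the critical services across providers is what provides the high availability.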

By combining multi-cloud resilience with cost-efficient compute, Findable now has an infrastructure foundation that scales with their business. As they continue to expand across Norway and the UK, serving an ever-growing number of property organizations, Cloudfleet provides the elasticity, cost efficiency, and reliability their AI workloads demand.